Opportunities Report

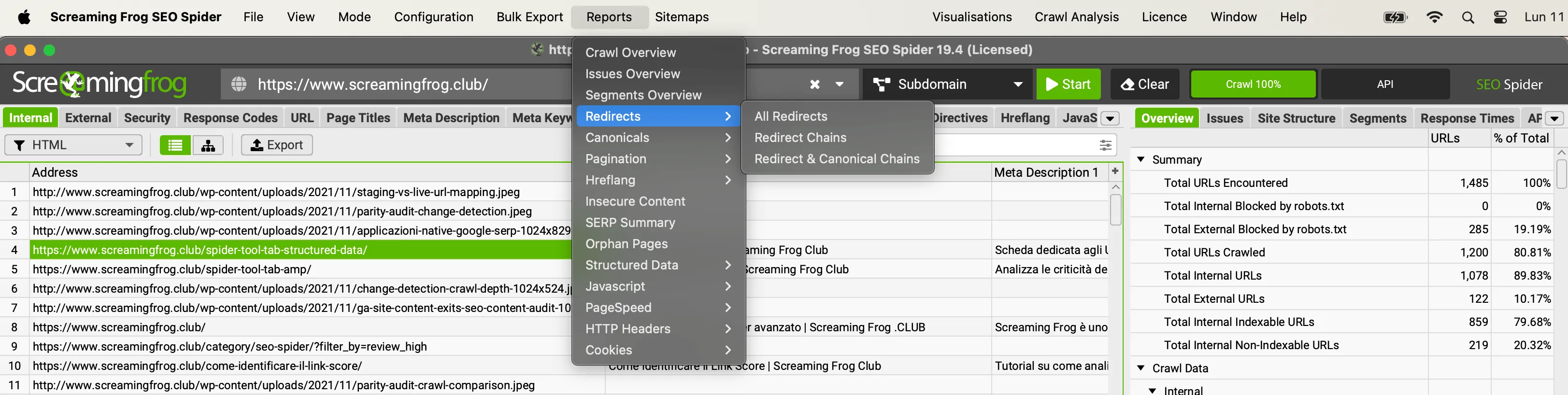

Screaming Frog has invested heavily in the SEO Spider over the past few years, and the set of available “Reports” has grown along with it. The tool lets you export a whole series of dedicated documents, each focused on a specific class of critical issues.

The list of documents is available in the main menu, and you can save them locally in ‘.csv’, ‘.xls’ or ‘.xlsx’ format, or as ‘gsheet’ by taking advantage of Google Drive. In the latter case you will find them in the “Screaming Frog SEO Spider” folder in Drive.

Crawl Overview

This report provides you with the data you find in the sidebar of the SEO Spider.

The document is a very useful summary of the scan that you can use as a first, top-down review of each tab and its respective filters.

Redirect

These documents focus on the redirects discovered during scanning and identify the source of each link.

Three different reports are currently available to you:

- All Redirects: shows all individual redirects and reports the presence of any redirect chains.

- Redirect Chains: includes URLs that have 2 or more consecutive redirects, creating a dangerous “Redirect Chain.”

- Redirect and Canonical Chains: identifies URLs with 2 or more redirects or “canonical” links in a chain.

The “Redirect Chains” and “Redirect and Canonical Chains” documents are very useful for mapping “redirect”, “canonical” and “Loop” situations, allowing you to identify the number of hops along the path and the source URLs.

In Spider mode (Mode > Spider) these reports show you all redirects discovered, from a single hop upward.

The document is divided into columns and includes:

- The “number of redirects.”

- The type of chain identified:

  - HTTP Redirect,

  - JavaScript Redirect,

  - Canonical,

  - etc.

- Whether there are redirection loops (Loops).

If the report has no records, it means that the Spider did not find loops or chains of redirects that need to be optimized.
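To make the idea of hops and loops concrete, here is a minimal Python sketch that follows a redirect map and reports the path and whether it loops. It is only an illustration of the concept, not how the SEO Spider works internally; the `trace_chain` function and the example.com URLs are hypothetical.

```python
def trace_chain(redirects, start):
    """Follow redirects from `start`; return (path, is_loop)."""
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            # We came back to a URL already visited: this is a loop.
            return path, True
        seen.add(current)
    return path, False


# Hypothetical redirect map: source URL -> redirect target
redirects = {
    "http://example.com/a": "https://example.com/a",
    "https://example.com/a": "https://example.com/b",
    "https://example.com/x": "https://example.com/y",
    "https://example.com/y": "https://example.com/x",
}

path, is_loop = trace_chain(redirects, "http://example.com/a")
hops = len(path) - 1  # number of redirects along the path
print(hops, is_loop)
```

A path with 2 or more hops corresponds to what the report calls a chain, and a path that returns to an already visited URL is a loop.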

You can also download the ‘All Redirects’, ‘Redirect Chains’ and ‘Redirect & Canonical Chains’ reports when scanning in list mode (Mode > List). In this case the document shows one line for each URL provided in the list.

By checking the options “Always Follow Redirects” and “Always Follow Canonicals”, the SEO Spider will continue to follow redirects and canonicals in list mode regardless of the crawl depth. In short, it follows every hop to the final destination.

Please note: the redirect reports are especially useful when migrating a website, to check that acquired ranking is passed on correctly and that crawl budget is not wasted.

Canonical

The documents ‘Canonical Chains’ and ‘Non-Indexable Canonicals’ highlight errors and problems with canonical link elements or implementation of HTTP canonicals.

1. The “Canonical Chains” report highlights any URL that has 2 or more canonical links in a chain. For example:

- URL A is “canonicalized” and has a “canonical URL” to URL B

- URL B is also “canonicalized” to URL C.

2. The ‘Non-Indexable Canonicals’ report highlights errors and problems with canonical links. Specifically, it shows all canonical links whose destination URLs are blocked by robots.txt or return a 3XX, 4XX or 5XX status code, that is, anything other than a 200 ‘OK’ response.

This report also provides data on all URLs that were discovered by the SEO Spider via the “canonical” link but have no internal links from the site.

This data will be available in the ‘unlinked’ column with the label ‘true’.

Pagination

The SEO Spider provides two reports dedicated to pagination:

- “Non-200 Pagination URLs”

- “Unlinked Pagination URLs”

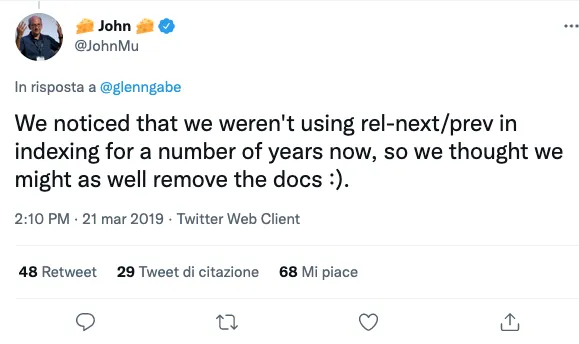

These two documents are very useful for highlighting errors and critical issues with the “rel=prev” and “rel=next” attributes of paginated pages.

By consulting “Non-200 Pagination URLs” you can identify each URL whose “rel=prev” and “rel=next” attributes point to resources with a status code other than 200.

The “Unlinked Pagination URLs” report helps you find all the URLs that the SEO Spider discovered thanks to the “next/prev” attributes but that receive no internal link from the website.

At the time of writing this guide, these attributes are no longer considered by Google but are still used by other search engines.

Hreflang

The hreflang attribute lets Google know the different language versions of a web page and return the most appropriate version to the user based on his or her language and region.

Screaming Frog offers 7 reports for you to download, each with a different focus on the attribute:

- All Hreflang URLs: this report is the most comprehensive and collects all URLs and their hreflang URLs, including the region and language values discovered during the crawl.

- Non-200 Hreflang URLs: through this document you can find out which pages include hreflang links to pages with a status code other than 200 (no response, blocked by robots.txt, 3XX, 4XX or 5XX responses).

- Unlinked Hreflang URLs: this document lists all URLs discovered through “hreflang” links that do not receive a single hyperlink from the site (in practice, orphan pages).

- Missing Confirmation Links: through this report you can identify which URLs are missing “Confirmation Links.” For example, page A has a hreflang to page B and informs the search engine bot that A and B are two language versions of the same content, but B does not have a hreflang back to page A, which creates an inconsistency.

- Inconsistent Language Confirmation Links: this report shows confirmation links that use different language codes for the same page.

- Non Canonical Confirmation Links: this document shows URLs whose hreflang points to “non-canonical” URLs. If you decide to “canonicalize” a URL you are telling the search engine that the content has a primary “canonical” version, so your hreflang should point to that version.

- Noindex Confirmation Links: the ideal report for unearthing URLs whose hreflang points to resources with the “noindex” attribute. Again, sending the bot to non-indexable resources creates a serious inconsistency between choosing to exclude a piece of content and inviting the search engine to serve that very item as a language version.
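The “Missing Confirmation Links” check described in the list above can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions: the `hreflang` dictionary (page URL mapped to the set of URLs it references via hreflang) and the `missing_confirmation_links` function are hypothetical, and language codes are ignored.

```python
def missing_confirmation_links(hreflang):
    """Return (page, target) pairs where `target` does not link back to `page`."""
    missing = []
    for page, targets in hreflang.items():
        for target in sorted(targets):
            # A confirmation link exists only if the target references the page too.
            if page not in hreflang.get(target, set()):
                missing.append((page, target))
    return missing


# Hypothetical crawl data: page -> URLs referenced through hreflang
hreflang = {
    "https://example.com/en/": {"https://example.com/it/", "https://example.com/fr/"},
    "https://example.com/it/": {"https://example.com/en/"},
    "https://example.com/fr/": set(),
}

for page, target in missing_confirmation_links(hreflang):
    print(f"{target} has no hreflang back to {page}")
```

Here the French page is referenced by the English one but never points back, so it is the only pair reported.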

Orphan Pages

Orphan pages are URLs that receive no internal links from other pages on the website, so the crawler cannot reach them.

The “Orphan Pages” report provides a list of URLs collected through the connection between the SEO Spider and the Google Analytics API or Google Search Console (Search Analytics API), or found in the XML Sitemap, that do not match the URLs discovered during crawling.

In short, the report lists URLs that the SEO Spider could not reach, either because there are no links to follow or because the links carry the “nofollow” attribute.

But if they have no links why do they exist in Google Analytics or Search Console databases?

They were probably indexed with a previous version of the site, or they come from other sources such as XML Sitemaps or referrals.

To collect data on orphan pages, simply configure the API in the SEO Spider configuration.

Config > API Access > Choose APIs to connect > Connect to new Account (or connect to previously configured accounts)

At the end of the scan, it is essential to run the “Crawl Analysis” to populate the relevant tab with data.

The sources available for checking orphan pages are:

- GA: The URL was discovered via the Google Analytics API.

- GSC: The URL was discovered in Google Search Console, from the Search Analytics API.

- Sitemap: the URL was discovered via XML Sitemap.

- GA & GSC & Sitemap: the URL was discovered in Google Analytics, Google Search Console & XML Sitemap.
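Conceptually, the report is a set difference between the URLs known to the external sources and the URLs found by the crawl. The following Python sketch illustrates this with the same source labels listed above; the `find_orphans` function and the sample paths are hypothetical, and it does not reproduce the actual logic of the SEO Spider.

```python
def find_orphans(crawled, ga=(), gsc=(), sitemap=()):
    """Map each URL missing from the crawl to a label naming its sources."""
    crawled = set(crawled)
    sources = {"GA": set(ga), "GSC": set(gsc), "Sitemap": set(sitemap)}
    found = {}
    for label, urls in sources.items():
        # URLs known to this source but never reached by the crawl
        for url in urls - crawled:
            found.setdefault(url, []).append(label)
    return {url: " & ".join(labels) for url, labels in found.items()}


# Hypothetical data
crawled = {"/", "/a"}
orphans = find_orphans(
    crawled,
    ga={"/a", "/old"},
    gsc={"/old"},
    sitemap={"/old", "/promo"},
)
for url, source in sorted(orphans.items()):
    print(url, source)
```

In this example `/old` is labeled “GA & GSC & Sitemap” and `/promo` is labeled “Sitemap”, while `/a` is excluded because the crawl reached it.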

Best practice: to get the most out of your SEO analysis with the Google Analytics API, I recommend choosing “Landing Page” as the dimension and “Organic Traffic” as the segment, so that you get more relevant data and avoid login pages, e-commerce cart pages, etc.

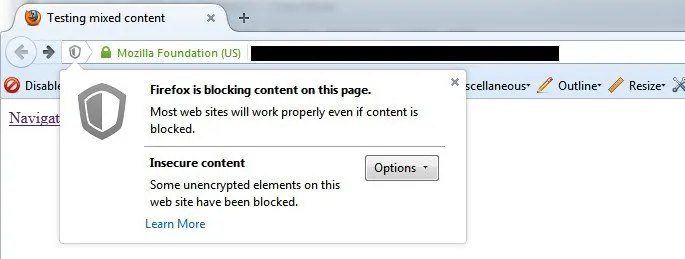

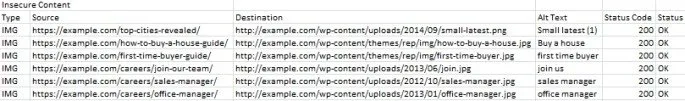

Insecure Content

This report is very useful because it alerts you to URLs with content that is deemed unsafe and allows you to avoid browser security warnings that might turn visitors away.

The “Insecure Content” document presents you with all secure URLs (HTTPS) that contain insecure elements served over HTTP, such as internal links, images, JS, CSS, SWF or external images on a CDN, social profiles, etc.
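As a rough illustration of what counts as an insecure element, this Python sketch scans the HTML of a page and collects every `href` or `src` value that starts with `http://`. It is a simplification under my own assumptions (only those two attributes are checked), and the `InsecureContentFinder` class and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class InsecureContentFinder(HTMLParser):
    """Collect (tag, url) pairs for href/src values served over plain HTTP."""

    URL_ATTRS = {"href", "src"}  # assumption: only these attributes are inspected

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in self.URL_ATTRS and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_insecure(html):
    finder = InsecureContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical markup of a page served over HTTPS
page = """
<a href="https://example.com/ok">ok</a>
<img src="http://cdn.example.com/logo.png">
<script src="http://example.com/app.js"></script>
"""

for tag, url in find_insecure(page):
    print(tag, url)
```

The secure link is ignored, while the image and the script are both flagged.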

The report is especially useful during a migration, when you need a complete view of potential critical issues.

Here is an example of the file, where the image destinations use the “http” protocol and are flagged as insecure by the SEO Spider.

Structured Data

Structured data gives the search engine explicit information about your content and, if managed correctly, can bring significant visibility benefits through the search engine’s various result features.

Screaming Frog provides you with 4 reports to evaluate and fix any critical “Structured Data” validation issues:

- Validation Errors & Warnings Summary: Aggregates structured data based on unique validation errors and discovered warnings by showing the number of URLs affected by each critical issue, with sample URLs identifying the specific issue.

- Validation Errors & Warnings: displays all structured data validation errors in a granular manner for each URL including property name (Organization etc), format (JSON-LD etc), severity of the problem (error or warning), type of validation (Google Product etc), and related message of the problem (example: /review property is required).

- Google Rich Results Features Summary: Aggregates data for “Google Rich Results Features” detected in a crawl by showing the number of URLs that have each feature.

- Google Rich Results Features: extensively maps each URL to the individual features available and shows which ones were detected for each URL.

PageSpeed

This report is based on the PageSpeed Insights API and provides the main indicators of web page loading time, allowing a precise diagnosis of any bottlenecks hindering performance.

Config > API Access > PageSpeed Insights

- PageSpeed Opportunities Summary: summarizes all unique opportunities discovered across the site, the number of URLs affected, and the average and total potential savings in size and milliseconds, to help you prioritize action.

- CSS Coverage Summary: highlights the potential savings from removing unused code from each CSS file loaded across the site.

- JavaScript Coverage Summary: highlights how much of each JS file is unused during the scan and the potential savings from removing the unused code.

HTTP Header Summary

This document returns an aggregate view of all HTTP response headers discovered during a scan. It shows each unique HTTP response header and the number of unique URLs that responded with the header.
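The aggregation behind this kind of summary can be sketched in Python as a count of unique URLs per header name. This is only an illustration of the idea; the `header_summary` function and the sample responses are hypothetical, and header names are compared case-insensitively as an assumption of the sketch.

```python
from collections import defaultdict


def header_summary(responses):
    """Count how many unique URLs responded with each HTTP header name."""
    urls_by_header = defaultdict(set)
    for url, headers in responses.items():
        for name in headers:
            # Header names are case-insensitive, so normalize before grouping.
            urls_by_header[name.lower()].add(url)
    return {name: len(urls) for name, urls in urls_by_header.items()}


# Hypothetical crawl data: URL -> response headers
responses = {
    "https://example.com/": {"Content-Type": "text/html", "X-Robots-Tag": "noindex"},
    "https://example.com/a": {"content-type": "text/html"},
    "https://example.com/b.css": {"Content-Type": "text/css", "Cache-Control": "max-age=3600"},
}

summary = header_summary(responses)
for name, count in sorted(summary.items()):
    print(name, count)
```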

To populate this report you must first enable the extraction of “HTTP Headers”.

Config > Spider > Extraction

More specific details about URLs and headers can be found in the “HTTP Headers” tab of the lower window and via the “All HTTP Headers” document export.

Bulk Export > Web > All HTTP Headers

Alternatively, you can query HTTP headers directly in the “Internal” tab, where they are added in separate unique columns.

Cookie Summary

The “Cookie Summary” report shows an aggregated view of the unique cookies discovered during a scan, considering their name, domain, expiry, and Secure and HttpOnly values.

The number of URLs on which each unique cookie was issued is also displayed.

To use this report, simply enable “cookie” extraction.

Config > Spider > Extraction

Don’t forget to also enable JavaScript rendering mode for an accurate view of cookies that are set on the page through JavaScript or pixel image tags.

This aggregate report is extremely useful when auditing a site for GDPR compliance.

You can see more details on the “Cookies” tab of the lower window and via the “All Cookies” export.

Bulk Export > Web > All Cookies

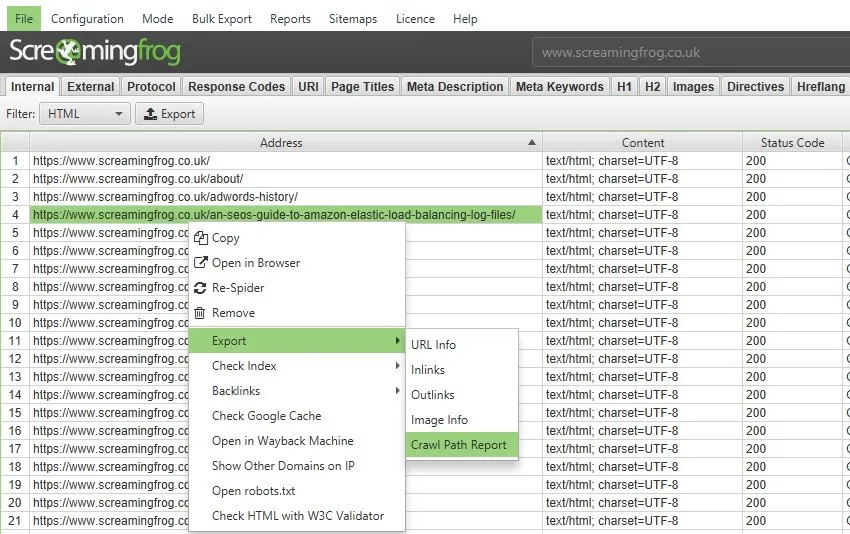

Crawl Path

Unlike the previous ones, the Crawl Path Report is not found in the main menu.

The document is available by right-clicking on a URL in the upper window of the SEO Spider and selecting the “Export” option.

This report shows the shortest path the SEO Spider followed during the crawl to discover the URL.

This information is very useful for pages with a very deep “Crawl Depth”, because it saves you from having to view the ‘inlinks’ of many URLs to find the original source. One application is diagnosing infinite URLs generated by plugins or recurring calendars.

You have to read the report from the bottom up. The first URL at the bottom of the ‘source’ column is the first URL scanned (at level ‘0’).

The ‘destination’ column, on the other hand, shows you which URLs were scanned next; these become the ‘source’ URLs for the next level (1), and so on, upward.

The first URL in the ‘destination’ column at the top of the report is the URL for which you exported the crawl path.
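A shortest path of this kind can be reconstructed with a breadth-first search over the link graph. The Python sketch below shows the technique on a tiny hypothetical site; the `crawl_path` function and the `links` dictionary are my own illustrative names, and the sketch does not claim to reproduce the SEO Spider's implementation.

```python
from collections import deque


def crawl_path(links, start, target):
    """Return the shortest list of URLs from `start` to `target`, or None."""
    parent = {start: None}  # URL -> URL it was first discovered from
    queue = deque([start])
    while queue:
        url = queue.popleft()
        if url == target:
            # Walk back through the parents, then reverse to get start -> target.
            path = []
            while url is not None:
                path.append(url)
                url = parent[url]
            return path[::-1]
        for nxt in links.get(url, []):
            if nxt not in parent:
                parent[nxt] = url
                queue.append(nxt)
    return None


# Hypothetical link graph: page -> pages it links to
links = {
    "/": ["/blog", "/shop"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/post"],
    "/shop": ["/blog/post"],
}

print(crawl_path(links, "/", "/blog/post"))
```

The page `/blog/post` is reachable both through the blog pagination (3 clicks) and through `/shop` (2 clicks), and the search returns the shorter route.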